The 2022 NVIDIA GPU Technology Conference (GTC) featured hardware and software announcements aimed at enabling the next generation of AI applications, with a focus on digital twins, realistic physics-driven models for virtual simulations (powering what NVIDIA calls the Omniverse) and real-time AI applications such as advanced driver assistance.

NVIDIA made several hardware and software announcements at the event, and during his keynote presentation Jensen Huang explained some of the drivers behind them. The slide below, shown near the start of the keynote, gives an overview of all the topics discussed in the keynote and covered in more detail in GTC sessions.

He discussed the need to increase computing performance by a factor of one million (1M) in order to build the massive models needed for global climate modeling. NVIDIA Modulus, a framework for scientific digital twins, performs physics-ML accelerated digital twin modeling using a transformer-based model that can be trained on lower-resolution data and make inferences at higher resolution. He said that this approach can be 45,000X faster than some other modeling approaches.

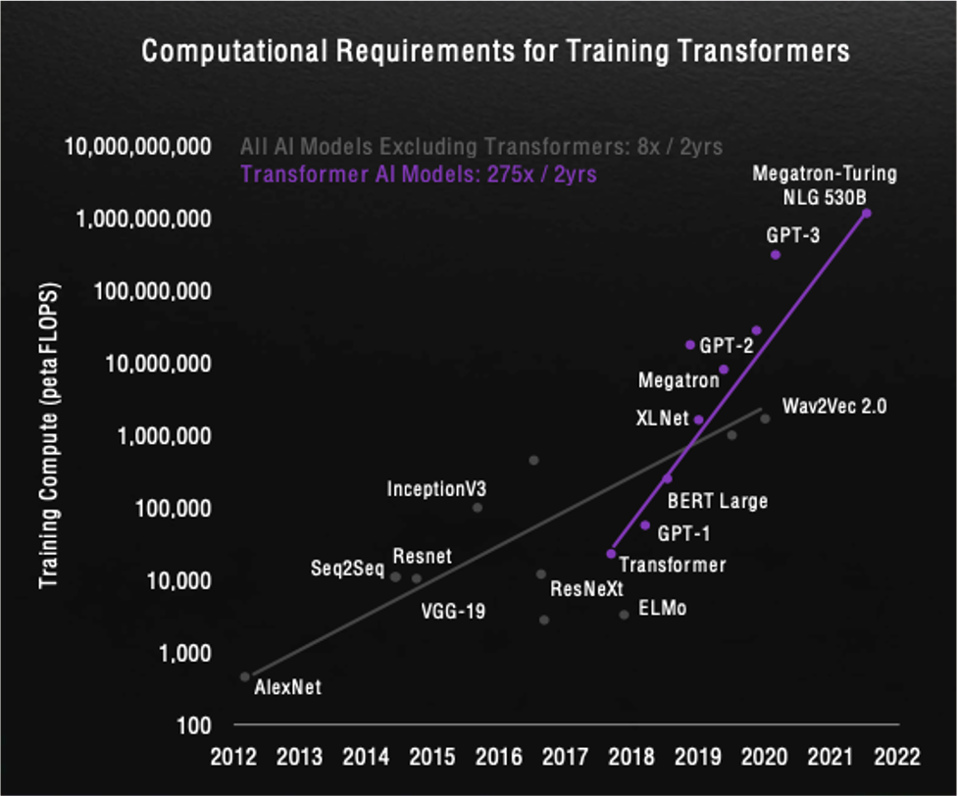

Transformers, introduced in 2017, are a fairly recent concept for some AI applications. In a transformer, every element of the input is connected to every other element through a mechanism called self-attention. This allows a transformer model to see relationships across the entire input from the very start of training. Because of this density of connections, the computation needed to train transformer models grows rapidly. The slide below from Jensen's talk shows the increasing demand for transformer training compared to earlier AI models.
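To make the "every element connects to every other element" idea concrete, here is a minimal Python sketch of single-head scaled dot-product self-attention. It assumes identity projections (queries, keys and values are simply the input vectors, with no learned weight matrices), and the function names are hypothetical; it is an illustration of the technique, not NVIDIA's or any framework's implementation. The point is the pair count: n input elements produce n × n attention scores, so doubling the input length roughly quadruples the work.

```python
import math
from typing import List, Tuple

Vector = List[float]


def softmax(scores: Vector) -> Vector:
    # Subtract the maximum score before exponentiating for numerical stability.
    top = max(scores)
    exps = [math.exp(s - top) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def self_attention(x: List[Vector]) -> Tuple[List[Vector], int]:
    """Single-head scaled dot-product self-attention with Q = K = V = x.

    Returns the output vectors and the number of pairwise scores computed.
    """
    n, d = len(x), len(x[0])
    outputs: List[Vector] = []
    pair_count = 0
    for i in range(n):
        # Score element i against every element j (including itself).
        scores = []
        for j in range(n):
            dot = sum(a * b for a, b in zip(x[i], x[j]))
            scores.append(dot / math.sqrt(d))
            pair_count += 1
        weights = softmax(scores)
        # Output i is a weighted mix of all n input vectors.
        outputs.append([sum(weights[j] * x[j][k] for j in range(n)) for k in range(d)])
    return outputs, pair_count


# Doubling the input length quadruples the number of pairwise scores.
for n in (4, 8, 16):
    tokens = [[float(i % 3), 1.0] for i in range(n)]
    _, pairs = self_attention(tokens)
    print(n, pairs)
# 4 16
# 8 64
# 16 256
```

Production transformers add learned projections, multiple heads and batching on GPUs, but the quadratic number of pairwise scores is the same.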

Transformers are especially useful for problems such as natural language processing and computer vision, and may be useful for many other AI applications. This will drive demand for AI training processing power. AI inference is also advancing significantly. NVIDIA's Triton Inference Server software handles model deployment and execution for AI applications. NVIDIA AI software development kits (SDKs) include Riva 2.0 for speech AI and Maxine for AI video conferencing. AI frameworks include Merlin 1.0 for hyperscale recommender systems and NeMo Megatron for training large language models.

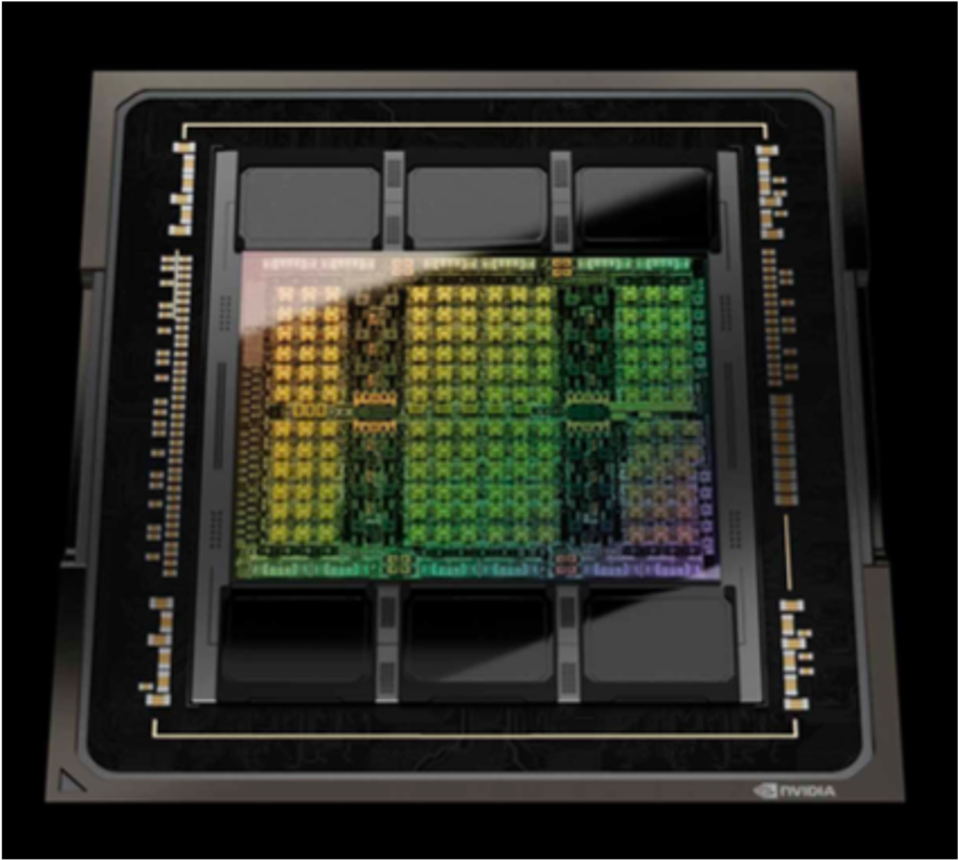

NVIDIA introduced its H100 Tensor Core GPU, shown below. The H100 has 80B transistors, is built on TSMC's 4N process and provides 4.9TB/s of bandwidth. It increases transformer performance by up to 6X, supports up to 7X more secure tenants for protecting data and AI models, and includes fourth-generation NVLink, which provides 7X the bandwidth of PCIe Gen5.
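The 7X NVLink-versus-PCIe figure can be checked with simple arithmetic. This sketch assumes 18 fourth-generation NVLink links per H100 at 50GB/s each (both directions combined) and a PCIe Gen5 x16 slot at 32 GT/s per lane; these inputs are not stated in the keynote text above, and the calculation ignores PCIe's small 128b/130b encoding overhead.

```python
# Assumed figures (not from the text above).
NVLINK4_LINKS_PER_H100 = 18
NVLINK4_GBPS_PER_LINK = 50.0  # GB/s per link, both directions combined
PCIE5_GT_PER_LANE = 32.0      # GT/s per lane for PCIe 5.0
PCIE5_LANES = 16              # x16 slot


def nvlink4_total_gbps() -> float:
    # Total NVLink bandwidth per GPU is links x per-link bandwidth.
    return NVLINK4_LINKS_PER_H100 * NVLINK4_GBPS_PER_LINK


def pcie5_x16_total_gbps() -> float:
    # 32 GT/s x 16 lanes / 8 bits per byte = 64 GB/s per direction
    # (ignoring 128b/130b encoding), doubled to count both directions.
    per_direction = PCIE5_GT_PER_LANE * PCIE5_LANES / 8
    return 2 * per_direction


ratio = nvlink4_total_gbps() / pcie5_x16_total_gbps()
print(f"NVLink 4: {nvlink4_total_gbps():.0f} GB/s, "
      f"PCIe Gen5 x16: {pcie5_x16_total_gbps():.0f} GB/s, ratio {ratio:.1f}X")
# NVLink 4: 900 GB/s, PCIe Gen5 x16: 128 GB/s, ratio 7.0X
```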

The H100 is available in an HGX H100 configuration or as the DGX H100 shown below. The DGX H100 has eight H100 GPUs delivering 32 PFLOPS of FP8 performance, with 640GB of HBM3 memory and 24TB/s of memory bandwidth.
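The DGX H100 totals follow from multiplying per-GPU figures by the number of GPUs. In this sketch the per-GPU values (80GB of HBM3, about 3TB/s of memory bandwidth and about 4 PFLOPS of FP8 with sparsity) and the count of eight GPUs per system are assumptions based on NVIDIA's launch specifications, not figures quoted in this article; the `Gpu` type and `system_totals` helper are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Gpu:
    hbm_gb: float
    mem_bw_tbps: float
    fp8_pflops: float


# Assumed per-GPU H100 launch figures (FP8 with sparsity).
H100 = Gpu(hbm_gb=80.0, mem_bw_tbps=3.0, fp8_pflops=4.0)


def system_totals(gpu: Gpu, count: int) -> Gpu:
    # Aggregate capacity, bandwidth and compute scale linearly with GPU count.
    return Gpu(gpu.hbm_gb * count, gpu.mem_bw_tbps * count, gpu.fp8_pflops * count)


dgx = system_totals(H100, 8)
print(f"{dgx.hbm_gb:.0f} GB HBM3, {dgx.mem_bw_tbps:.0f} TB/s, {dgx.fp8_pflops:.0f} PFLOPS FP8")
# 640 GB HBM3, 24 TB/s, 32 PFLOPS FP8
```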

A DGX POD (a multi-rack unit) with an NVLink Switch supports 20.5TB of HBM3 memory and 768TB/s of memory bandwidth. Eighteen DGX PODs together deliver significant aggregate performance, with 275 PFLOPS of FP64 compute and 230TB/s of bisection bandwidth. Compared to the A100, the H100 trains the GPT-3 model 6X faster and provides a 30X throughput improvement for inference at a one-second response time. The H100 CNX combines an H100 with a ConnectX-7 (CX-7) SmartNIC. NVIDIA is offering H100 products at many scales, as shown below.
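The DGX POD memory figures are consistent with linking 32 DGX H100 systems. That pod size is an inference, not something stated above: this sketch divides the quoted 768TB/s by the 24TB/s per DGX H100 and then checks that the same count reproduces the 20.5TB HBM3 total. It also assumes eight GPUs per DGX H100; the `pod_totals` helper is hypothetical.

```python
DGX_HBM_TB = 0.640      # 640GB HBM3 per DGX H100
DGX_MEM_BW_TBPS = 24.0  # 24TB/s memory bandwidth per DGX H100
GPUS_PER_DGX = 8        # assumed eight H100 GPUs per DGX H100


def pod_totals(num_dgx: int) -> dict:
    # Pod-level totals are per-system figures times the number of systems.
    return {
        "gpus": num_dgx * GPUS_PER_DGX,
        "hbm_tb": num_dgx * DGX_HBM_TB,
        "mem_bw_tbps": num_dgx * DGX_MEM_BW_TBPS,
    }


# Infer the pod size from the quoted 768TB/s aggregate memory bandwidth.
num_dgx = round(768 / DGX_MEM_BW_TBPS)
pod = pod_totals(num_dgx)
print(f"{num_dgx} DGX H100 systems, {pod['gpus']} GPUs, "
      f"{pod['hbm_tb']:.1f}TB HBM3, {pod['mem_bw_tbps']:.0f}TB/s")
# 32 DGX H100 systems, 256 GPUs, 20.5TB HBM3, 768TB/s
```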

NVIDIA also introduced its Grace Hopper Superchip, which pairs a Grace CPU with an H100 GPU over a coherent NVLink chip-to-chip (NVLink-C2C) interface providing up to 900GB/s. The NVLink chip-to-chip interconnect enables a number of system architectures, and NVIDIA expects NVLink to be used in a number of semi-custom chips and systems.

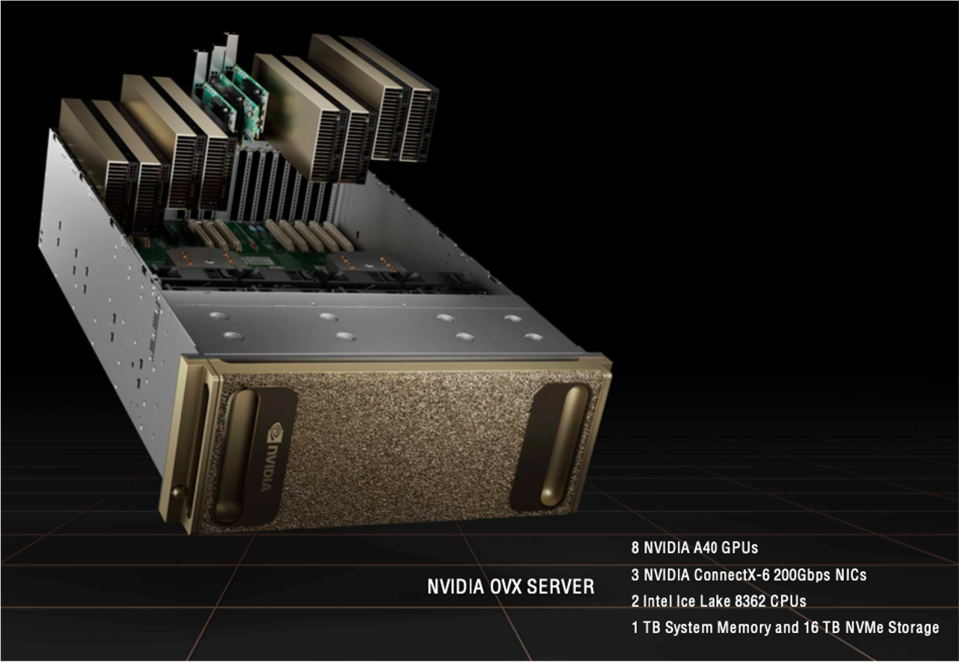

Jensen also talked extensively about Omniverse digital twins for enabling the next wave of AI, including robot computer-aided design (CAD), factory CAD, factory planning, digital human training and a robot gym for robot training, as well as physics and path-tracing applications. The company's OVX servers are designed for Omniverse applications. One of these servers, shown below, supports 1TB of memory and 16TB of NVMe storage.

OVX servers can also be consolidated into SuperPODs that use remote direct memory access (RDMA) for the lowest-latency data sharing. These are available from Inspur, Lenovo and Supermicro. Many companies are using NVIDIA Omniverse for digital twins and robotics, systems integration, sensor models, design and content creation, rendering, and asset and material libraries. The company also announced the Spectrum-4 400G Ethernet switch, with 51.2Tb/s of bandwidth, 100G SerDes and 128 ports of 400GbE.
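The Spectrum-4 numbers fit together arithmetically: 128 ports at 400Gb/s is exactly 51.2Tb/s. This sketch assumes each 400GbE port is built from four 100G SerDes lanes and that the 51.2T figure counts one direction of switching capacity; both are assumptions for illustration.

```python
PORTS = 128
PORT_GBPS = 400    # 400GbE per port
SERDES_GBPS = 100  # assumed 100G per SerDes lane

total_gbps = PORTS * PORT_GBPS              # aggregate port bandwidth in Gb/s
serdes_lanes = total_gbps // SERDES_GBPS    # lanes needed at 100G each
lanes_per_port = PORT_GBPS // SERDES_GBPS   # lanes per 400GbE port

print(f"{total_gbps / 1000:.1f} Tb/s, {serdes_lanes} SerDes lanes, {lanes_per_port} per port")
# 51.2 Tb/s, 512 SerDes lanes, 4 per port
```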

The figure below shows various software and target applications in the NVIDIA Omniverse.

NVIDIA DRIVE Orin is being used in BYD electric vehicles. Holoscan is being used in healthcare and diagnostic applications such as light-sheet microscopes and robotic medical devices. Isaac is being used in many commercial robotic systems. Metropolis is being used for factory robotics and automation (AWS uses it for its warehouse distribution systems). Omniverse Cloud provides remote design collaboration and can even include collaboration with digital entities.

As these examples show, NVIDIA applications process a lot of data, and its hardware includes substantial memory and storage. Beyond the memory and storage built into its own hardware, NVIDIA partners with several storage companies, as well as with companies whose hardware uses a lot of storage and memory. Below we look at products from VAST Data, DDN and Inspur.

VAST Data introduced its Ceres storage enclosure, a 1RU form factor using E1.L SSDs (its earlier storage systems used U.2 Intel QLC SSDs, with 4 bits per cell, in 2RU enclosures). Ceres uses four Arm-powered BlueField-1 BF1600 DPUs providing more than 60GB/s of network bandwidth. At GTC, NVIDIA showed a rack of Ceres enclosures (39RU of Ceres) providing storage for its DGX SuperPOD. Ceres is planned to be available by mid-2022.

The raw storage capacity of a Ceres enclosure is about 676TB, the same as one of the older configurations. Ceres likely uses Solidigm D5-P5326 NVMe (PCIe Gen 4) SSDs with 144-layer 3D NAND. The earlier VAST storage systems used Intel Optane SSDs as a write cache buffer.

Ceres instead uses eight 800GB Kioxia FL6 SSDs (about 6.4TB in total), which offer higher endurance than conventional NAND SSDs; the earlier VAST systems used 12 Optane SSDs. VAST says that with data reduction each Ceres box provides 2PB of storage. Ceres will be manufactured by AIC and Mercury Systems and will serve as the data capacity building block for VAST's Universal Storage Clusters.
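The Ceres capacity figures imply roughly a 3:1 data reduction ratio. In this sketch the split of the ~676TB raw capacity into 22 E1.L drives of 30.72TB each is an assumption chosen because it matches the quoted total, not a configuration stated above; the 8 × 800GB Kioxia FL6 buffer and the 2PB effective capacity come from the text.

```python
# Hypothetical drive layout consistent with ~676TB raw (assumption).
QLC_DRIVES = 22
QLC_TB_EACH = 30.72
# Write buffer and effective capacity as described in the text.
SCM_DRIVES = 8
SCM_GB_EACH = 800
EFFECTIVE_TB = 2000  # VAST's quoted 2PB per Ceres box after data reduction

raw_tb = QLC_DRIVES * QLC_TB_EACH
scm_tb = SCM_DRIVES * SCM_GB_EACH / 1000
implied_reduction = EFFECTIVE_TB / raw_tb  # effective capacity / raw capacity

print(f"raw {raw_tb:.0f}TB, SCM {scm_tb:.1f}TB, implied data reduction {implied_reduction:.1f}:1")
# raw 676TB, SCM 6.4TB, implied data reduction 3.0:1
```

The actual reduction achieved depends heavily on the data being stored, so the 3:1 figure should be read as what VAST's 2PB claim implies, not as a guarantee.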

DDN announced next-generation storage appliances for NVIDIA DGX SuperPODs. The DDN A3I AI400X2 delivers 90GB/s and 3M IOPS to an NVIDIA DGX A100 system. The appliances come with 250TB or 500TB of usable all-NVMe storage capacity. The company says that in 2021 it delivered more than 2.5EB of flash and hybrid storage for AI, analytics and deep learning in cloud and customer data centers. The image below shows a DDN storage enclosure with NVIDIA DGX servers.
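To put the AI400X2 throughput in perspective, a quick calculation shows how long reading an appliance's full usable capacity would take. This assumes the 90GB/s rate is sustained for the whole read and uses decimal units (1TB = 1000GB); real workloads will vary, and `full_scan_seconds` is a hypothetical helper.

```python
def full_scan_seconds(capacity_tb: float, throughput_gbps: float) -> float:
    # Decimal units: 1TB = 1000GB. Assumes throughput is sustained throughout.
    return capacity_tb * 1000 / throughput_gbps


for capacity in (250, 500):
    minutes = full_scan_seconds(capacity, 90) / 60
    print(f"{capacity}TB at 90GB/s: about {minutes:.0f} minutes")
# 250TB at 90GB/s: about 46 minutes
# 500TB at 90GB/s: about 93 minutes
```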

Inspur announced two new servers using NVIDIA hardware. Its MetaEngine server, targeted at digital twins and virtual world applications, has eight NVIDIA A40 GPUs, three NVIDIA ConnectX®-6 Dx 200Gbps SmartNICs, 1TB of system memory and 16TB of NVMe storage. It also announced Information AIstation servers using the NVIDIA H100 Tensor Core GPU.

The 2022 NVIDIA GTC introduced many new processing systems and software that will drive AI development and the demand for digital storage and memory to support these applications. Partners including VAST Data, DDN and Inspur announced products to help meet that demand.