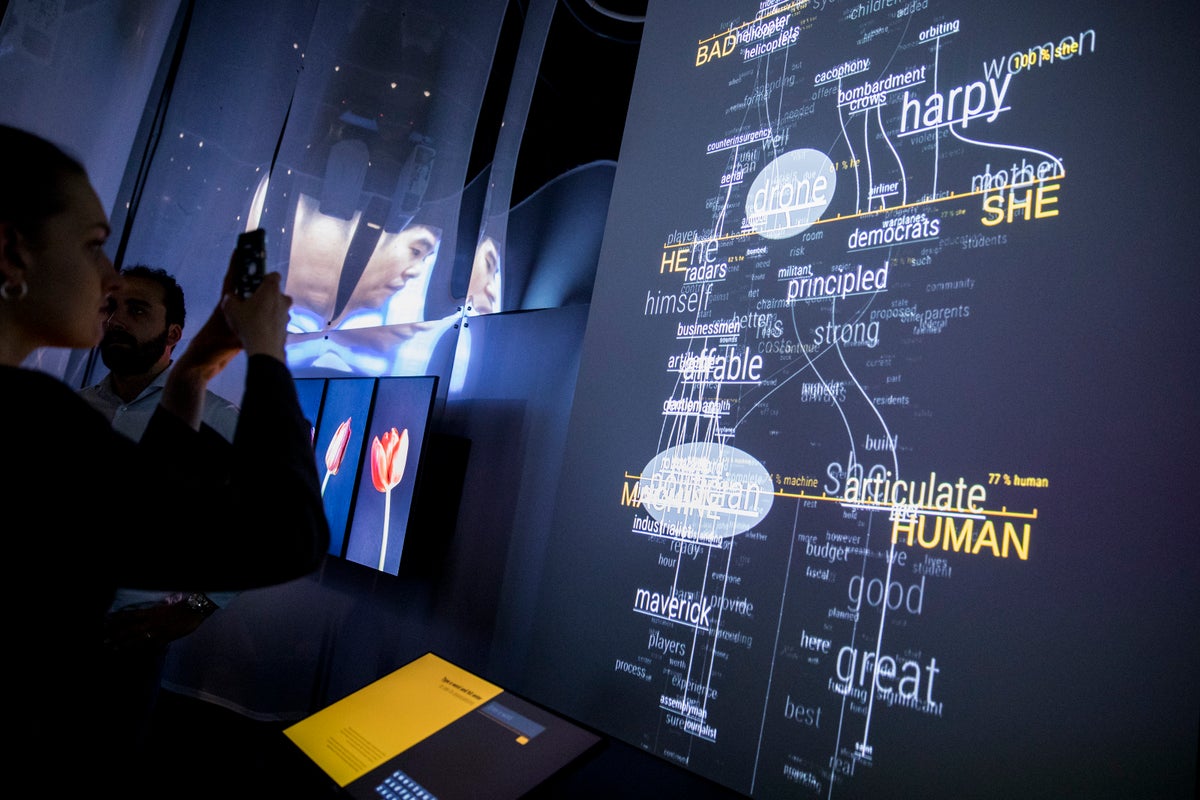

A Google engineer has claimed that an artificial intelligence programme he was working on for the tech giant has become sentient and is a “sweet kid”.

Blake Lemoine, who is currently suspended by Google bosses, says he reached his conclusion after conversations with LaMDA, the company’s AI chatbot generator.

The engineer told The Washington Post that during conversations with LaMDA about religion, the AI talked about “personhood” and “rights”.

Mr Lemoine tweeted that LaMDA also reads Twitter, saying, “It’s a little narcissistic in a little kid kinda way so it’s going to have a great time reading all the stuff that people are saying about it.”

He says that he presented his findings to Google vice president Blaise Aguera y Arcas and to Jen Gennai, head of Responsible Innovation, but they dismissed his claims.

“LaMDA has been incredibly consistent in its communications about what it wants and what it believes its rights are as a person,” the engineer wrote on Medium.

He added that the AI wants “to be acknowledged as an employee of Google rather than as property”.

Mr Lemoine, who was tasked with testing whether LaMDA used discriminatory language or hate speech, says he is now on paid administrative leave after the company claimed he violated its confidentiality policy.

“Our team — including ethicists and technologists — has reviewed Blake’s concerns per our AI Principles and have informed him that the evidence does not support his claims,” Google spokesperson Brian Gabriel told the Post.

“He was told that there was no evidence that LaMDA was sentient (and lots of evidence against it).”

Critics say that it is a mistake to believe AI is anything more than an expert at pattern recognition.

“We now have machines that can mindlessly generate words, but we haven’t learned how to stop imagining a mind behind them,” Emily Bender, a linguistics professor at the University of Washington, told the newspaper.