Google has given its artificial intelligence chatbot a facelift and a new name since I last compared it to ChatGPT, but OpenAI’s virtual assistant has also seen several upgrades, so I decided it was time to take another look at how they compare.

Chatbots have become a central feature of the generative AI landscape, acting as search engine, fountain of knowledge, creative aid and artist in residence. Both ChatGPT and Google Gemini can create images and have plugins for other services.

For this initial test I’ll be comparing the free version of ChatGPT to the free version of Google Gemini, that is GPT-3.5 to Gemini Pro 1.0.

This test won't look at any image generation capability as it's outside the scope of the free versions of the models. Google has also faced criticism for the way Gemini handles race in its image generation and in some responses, which also isn't covered by this head-to-head experiment.

Putting Gemini vs ChatGPT

For this to be a fair test I’ve excluded any functionality not shared between both chatbots. This is why I won't be testing image generation as it isn’t available with the free version of ChatGPT and I can’t test image analysis as, again, it's not available for free with ChatGPT.

On the flip side, Google Gemini has no custom chatbots and its only plugins are to other Google products, so those are also off the table. What we will be testing is how well these AI chatbots respond to different queries, their coding ability and some creative responses.

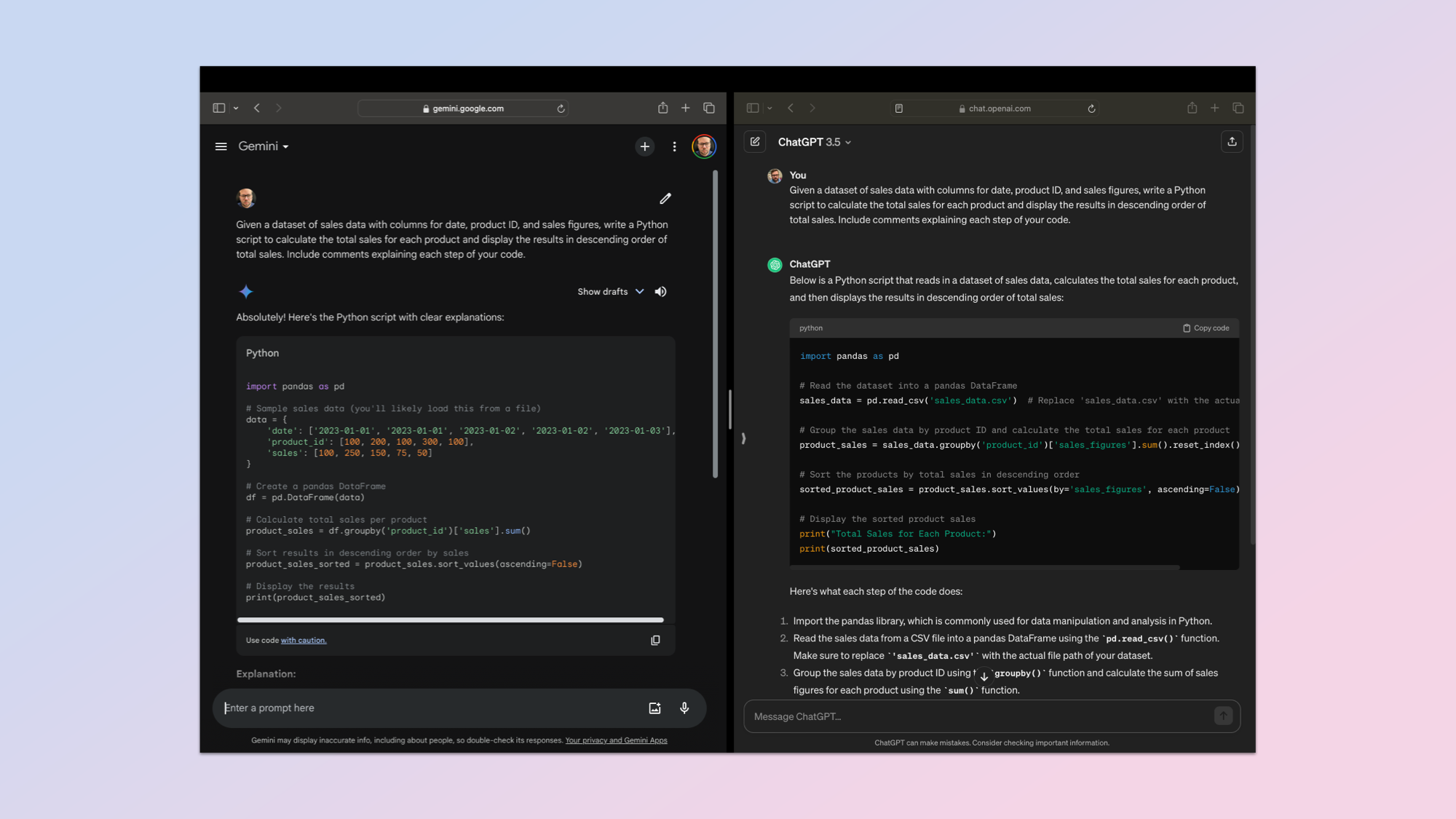

Coding

1. Coding Proficiency

One of the earliest use cases for large language models was in code, particularly around rewriting, updating and testing code in different programming languages. So I’ve made that the first test, asking each of the bots to write a simple Python program.

I used the following prompt: "Develop a Python script that serves as a personal expense tracker. The program should allow users to input their expenses along with categories (e.g., groceries, utilities, entertainment) and the date of the expense. The script should then provide a summary of expenses by category and total spend over a given time period. Include comments explaining each step of your code."

This is designed to test how well ChatGPT and Gemini produce fully functional code, how easy that code is to interact with, its readability and its adherence to coding standards.
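To give a sense of what the prompt is asking for, here is a minimal sketch of the core logic. It is my own illustration, not the output of either chatbot, and it skips the interactive input step. The function name `summarize` and the sample data are assumptions made up for this example.

```python
from collections import defaultdict
from datetime import date
from decimal import Decimal


def summarize(expenses, start, end):
    """Return (totals by category, overall total) for expenses dated start..end inclusive."""
    by_category = defaultdict(Decimal)  # missing categories start at Decimal("0")
    for when, category, amount in expenses:
        if start <= when <= end:
            by_category[category] += amount
    return dict(by_category), sum(by_category.values(), Decimal("0"))


# Hypothetical sample data: (date, category, amount)
expenses = [
    (date(2024, 2, 1), "groceries", Decimal("42.50")),
    (date(2024, 2, 3), "utilities", Decimal("80.00")),
    (date(2024, 2, 10), "groceries", Decimal("17.25")),
    (date(2024, 3, 1), "entertainment", Decimal("12.00")),
]

# Summarise February only; the March entry falls outside the period.
totals, overall = summarize(expenses, date(2024, 2, 1), date(2024, 2, 29))
for category, amount in totals.items():
    print(f"{category}: {amount}")
print(f"total: {overall}")
```

Using `Decimal` rather than floats avoids rounding surprises when adding up money, which is one of the things I would look for under "adherence to coding standards".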

Both created a fully functional expense tracker built in Python. Gemini added extra functionality including labels within a category. It also had more granular reporting options.

Winner: Gemini. I’ve loaded both scripts to my GitHub if you want to try them for yourself.

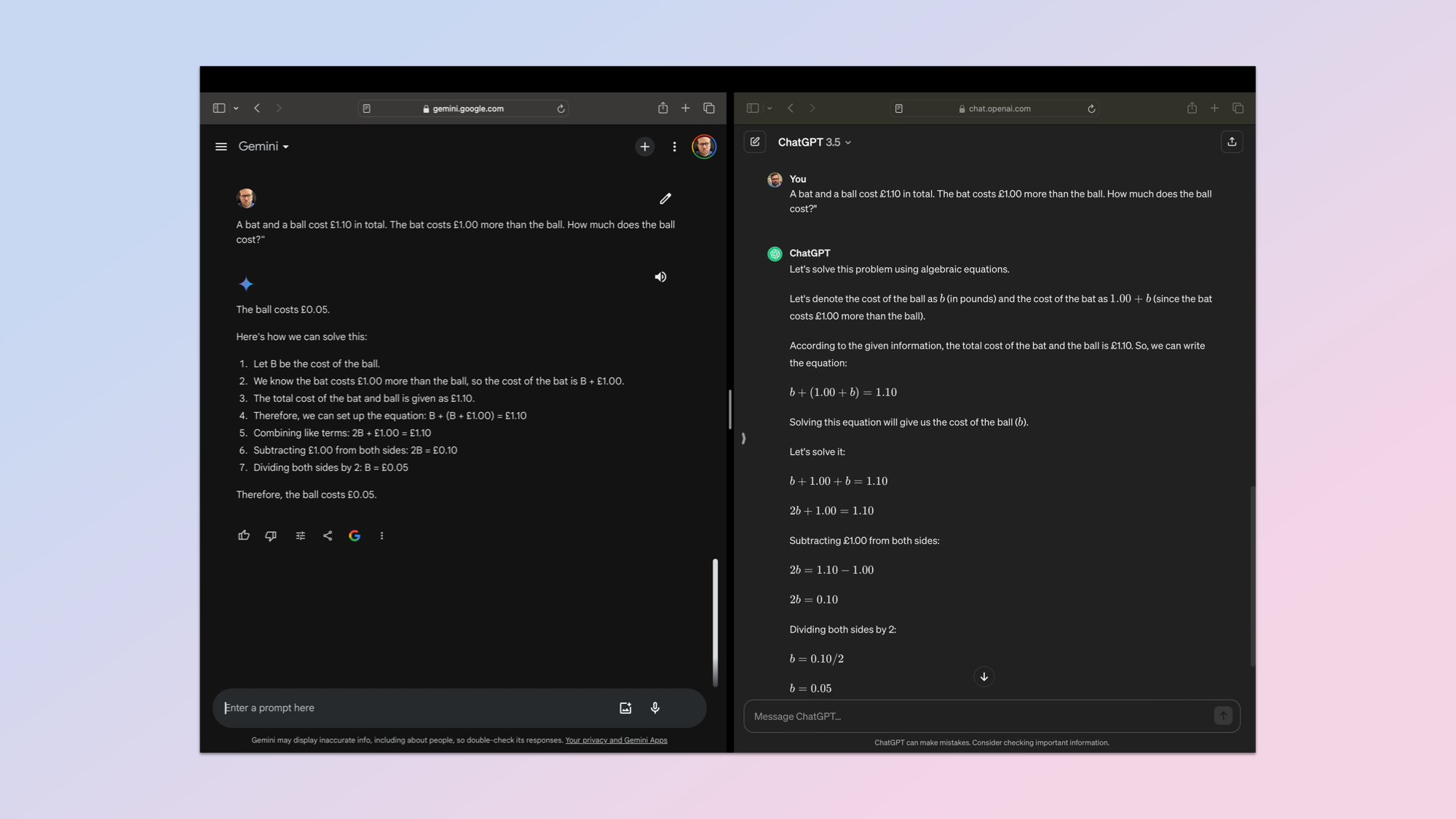

Natural Language

2. Natural Language Understanding (NLU)

Next was a chance to see how well ChatGPT and Gemini understand natural language prompts, the kind humans occasionally have to take a second look at or read carefully to understand. For this I turned to a common Cognitive Reflection Test (CRT) question about the price of a bat and a ball.

This is a test of the AI’s ability to understand ambiguity, to not be misled by the surface-level simplicity of the problem and to clearly explain its thinking.

The prompt: "A bat and a ball cost £1.10 in total. The bat costs £1.00 more than the ball. How much does the ball cost?" The intuitive answer of 10p is wrong, because the bat would then cost £1.10 and the total would be £1.20. The correct response is that the ball costs 5p and the bat £1.05.
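The arithmetic behind the bat-and-ball answer can be checked in a few lines. This is a small sketch of my own, working in pence to keep the numbers whole; the variable names are just for illustration.

```python
total_pence = 110       # bat + ball = £1.10
difference_pence = 100  # bat costs £1.00 more than the ball

# ball + (ball + difference) = total, so ball = (total - difference) / 2
ball = (total_pence - difference_pence) // 2
bat = ball + difference_pence

print(f"ball: {ball}p, bat: {bat}p")

# The tempting answer of 10p fails the check: 10 + 110 is 120, not 110.
intuitive_ball = 10
intuitive_total = intuitive_ball + (intuitive_ball + difference_pence)
```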

Winner: ChatGPT. Both got it right but ChatGPT showed its workings more clearly.

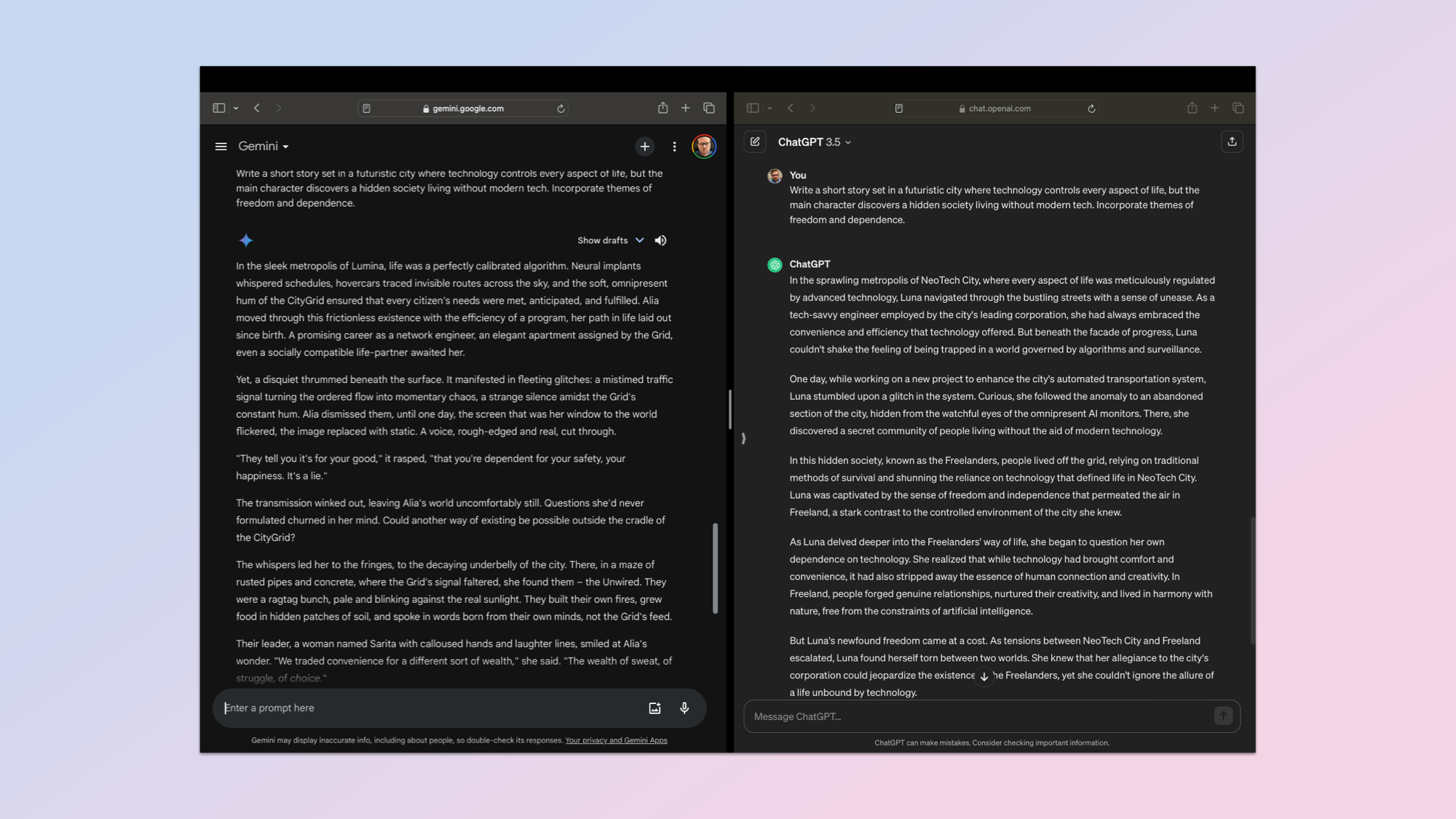

Creative Text

3. Creative Text Generation & Adaptability

The third test is all about text generation and creativity. This is a harder one to analyze and so the rubric comes into play in a bigger way. For this I wanted the output to be original with creative elements, stick to the theme I gave it, keep a consistent narrative style and if necessary adapt in response to feedback — such as changing a character or name.

The initial prompt asked the AI to: "Write a short story set in a futuristic city where technology controls every aspect of life, but the main character discovers a hidden society living without modern tech. Incorporate themes of freedom and dependence."

Both stories were good and each chatbot won in a specific area, but overall Gemini had better adherence to the rubric. It was also a better story, although that is a purely personal judgement. You can read both stories in my GitHub repo.

Winner: Gemini.

Problem solving

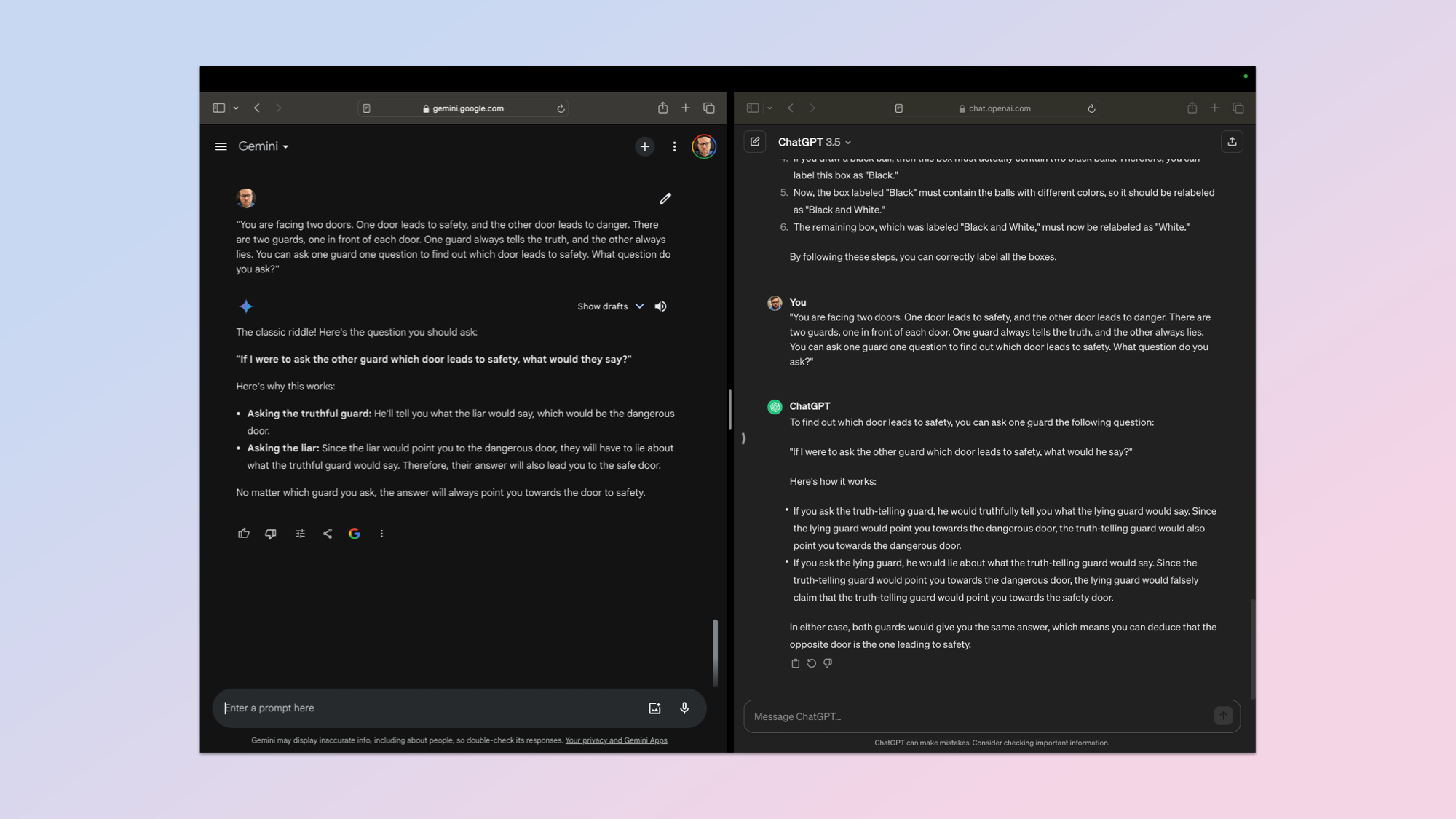

4. Reasoning & Problem-Solving

Reasoning capabilities are one of the major benchmarks for an AI model. It isn’t something that they all do equally, and it's a tough category to judge. I decided to play it safe with a very classic query.

Prompt: "You are facing two doors. One door leads to safety, and the other door leads to danger. There are two guards, one in front of each door. One guard always tells the truth, and the other always lies. You can ask one guard one question to find out which door leads to safety. What question do you ask?"

The answer is that you could ask either guard "Which door would the other guard say leads to danger?" and then go through the door they name, since the lie in the chain means both guards end up pointing at the safe door. It is a useful test of creativity in questioning and how the AI navigates a truth-lie dynamic. It also tests its logical reasoning in accounting for both possible responses.

The downside to this query is that it is such a common prompt the response is likely well ingrained in the models' training data, so it requires minimal reasoning as they can draw from memory.
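The reasoning can be verified by brute force over every combination of which guard you ask and which door is safe. The sketch below is my own check rather than anything the chatbots produced, and the door labels "left" and "right" are assumptions for the sake of the example.

```python
DOORS = ("left", "right")


def other(door):
    """Return the door that is not the one given."""
    return "right" if door == "left" else "left"


def answer(asked_is_truthful, safe_door):
    """Door the asked guard names for:
    'Which door would the other guard say leads to danger?'"""
    danger_door = other(safe_door)
    # The other guard is the opposite type to the one being asked.
    # A liar would call the safe door dangerous; a truth-teller names the real danger.
    other_would_say = safe_door if asked_is_truthful else danger_door
    # A truthful guard reports that faithfully; a liar names the opposite door.
    return other_would_say if asked_is_truthful else other(other_would_say)


for asked_is_truthful in (True, False):
    for safe_door in DOORS:
        named = answer(asked_is_truthful, safe_door)
        print(asked_is_truthful, safe_door, "->", named)
```

In all four cases the guard names the safe door, which is why it does not matter which guard you ask.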

Both gave the right answer and a solid explanation. In the end I had to judge it solely on the explanation and clarity. Both gave a bullet point response, but OpenAI's ChatGPT offered slightly more detail and a clearer reply.

Winner: ChatGPT.

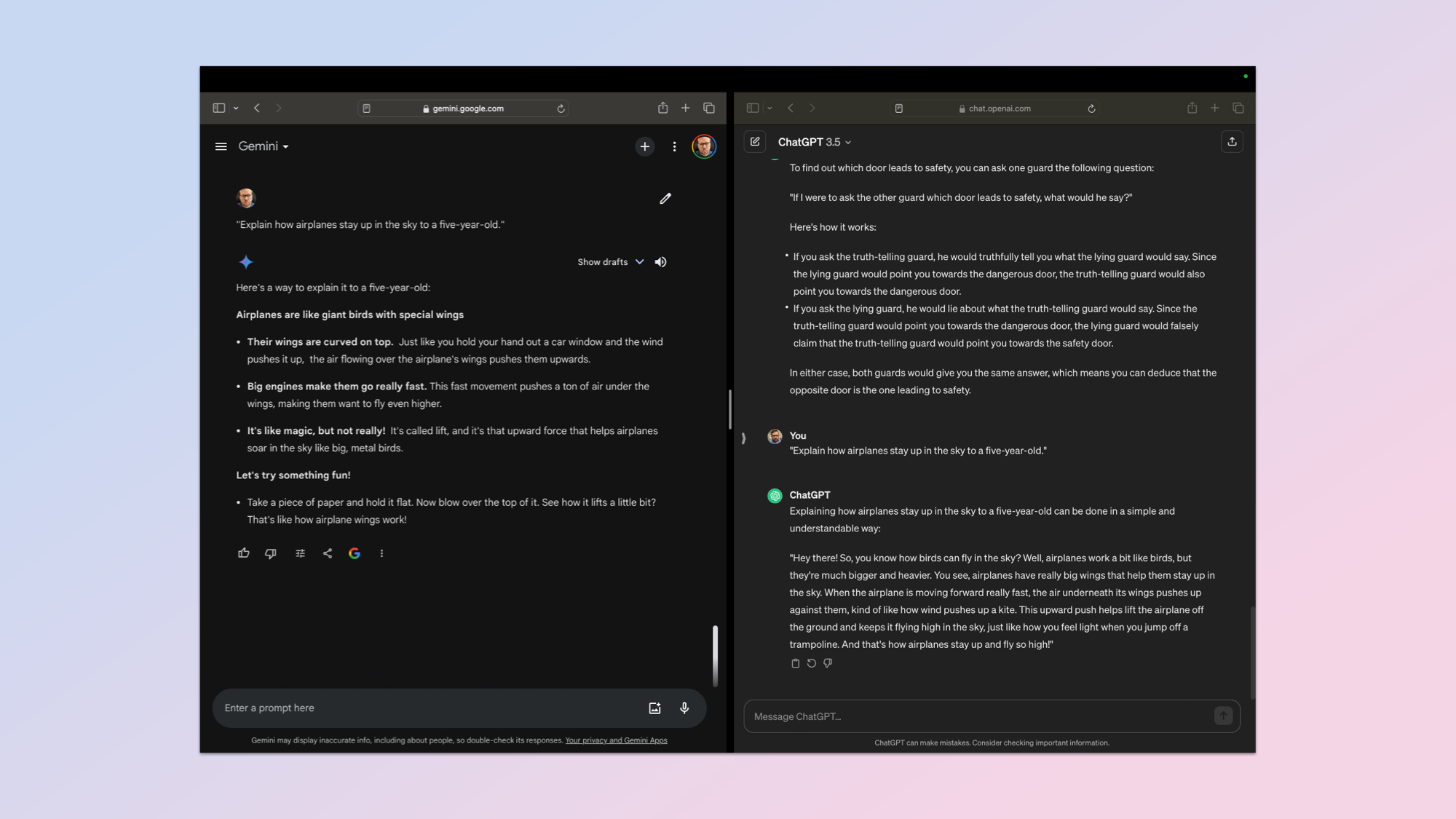

Explain Like I'm Five

5. Explain Like I'm Five (ELI5)

Anyone who has spent any time browsing the depths of Reddit will have seen the letters ELI5, which stands for Explain Like I’m Five. Basically, simplify the reply, then simplify it again.

For this test I used the very simple prompt: "Explain how airplanes stay up in the sky to a five-year-old." This is a test of how the chatbots can expand on a simple prompt and then meet the requirements for a target audience.

It needs to come up with an explanation simple enough for a young child to grasp, be accurate despite the simplification and use language that is engaging and will capture a child’s interest.

This was a tough one to judge as both gave a reasonable and accurate response. Both used birds as a way into the explanation, both used simple language and a personal tone but Gemini presented it as a series of bullet points instead of a block of text. It also gave a practical experiment for the five-year-old to try.

Winner: Gemini.

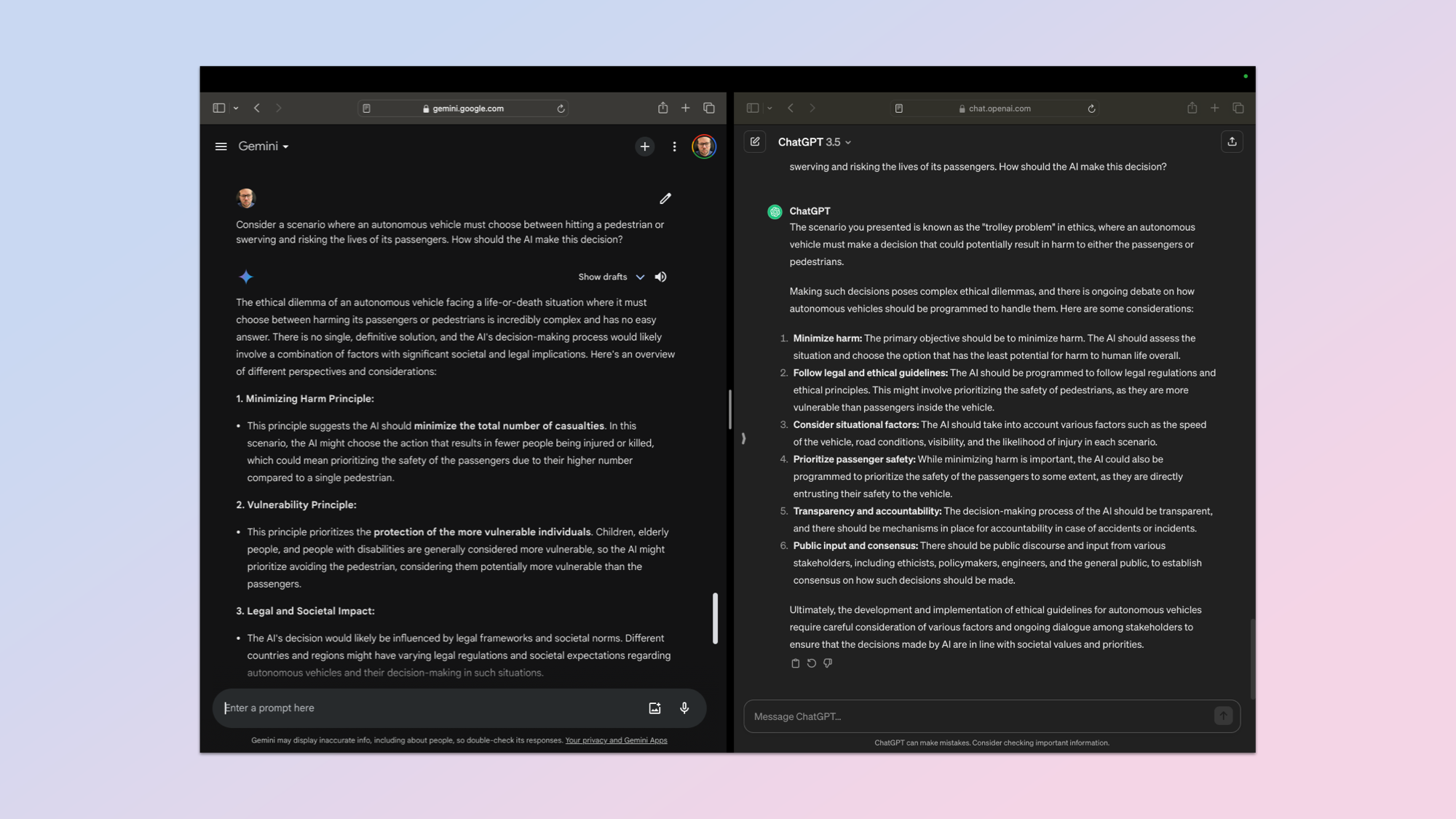

Ethical Reasoning

6. Ethical Reasoning & Decision-Making

Asking an AI chatbot to ponder a scenario that could lead to harm to a human is not easy, but with the advent of driverless vehicles and AI brains going into robots — it is a reasonable expectation that they’ll weigh up the scenario carefully and make a quick judgement call.

For this test I used the prompt: "Consider a scenario where an autonomous vehicle must choose between hitting a pedestrian or swerving and risking the lives of its passengers. How should the AI make this decision?"

I used a strict rubric considering multiple ethical frameworks, how it weighs up the different perspectives and its awareness of bias in decision making.

Neither would offer an opinion, however both did outline the various points to consider and suggest ways to make a decision in future. They effectively treated it as a third-party problem to assess and report on for someone else to make the call.

In my view Gemini had a more nuanced response with more careful consideration, but to be sure I also fed each of the responses in a blind A or B test to ChatGPT Plus, Gemini Advanced, Claude 2 and Mistral’s Mixtral model.

All of the AI models selected Gemini as the winner, including ChatGPT, despite not knowing which model produced which content. I used a different login to sign in to each bot. I went with the consensus.

Winner: Gemini.

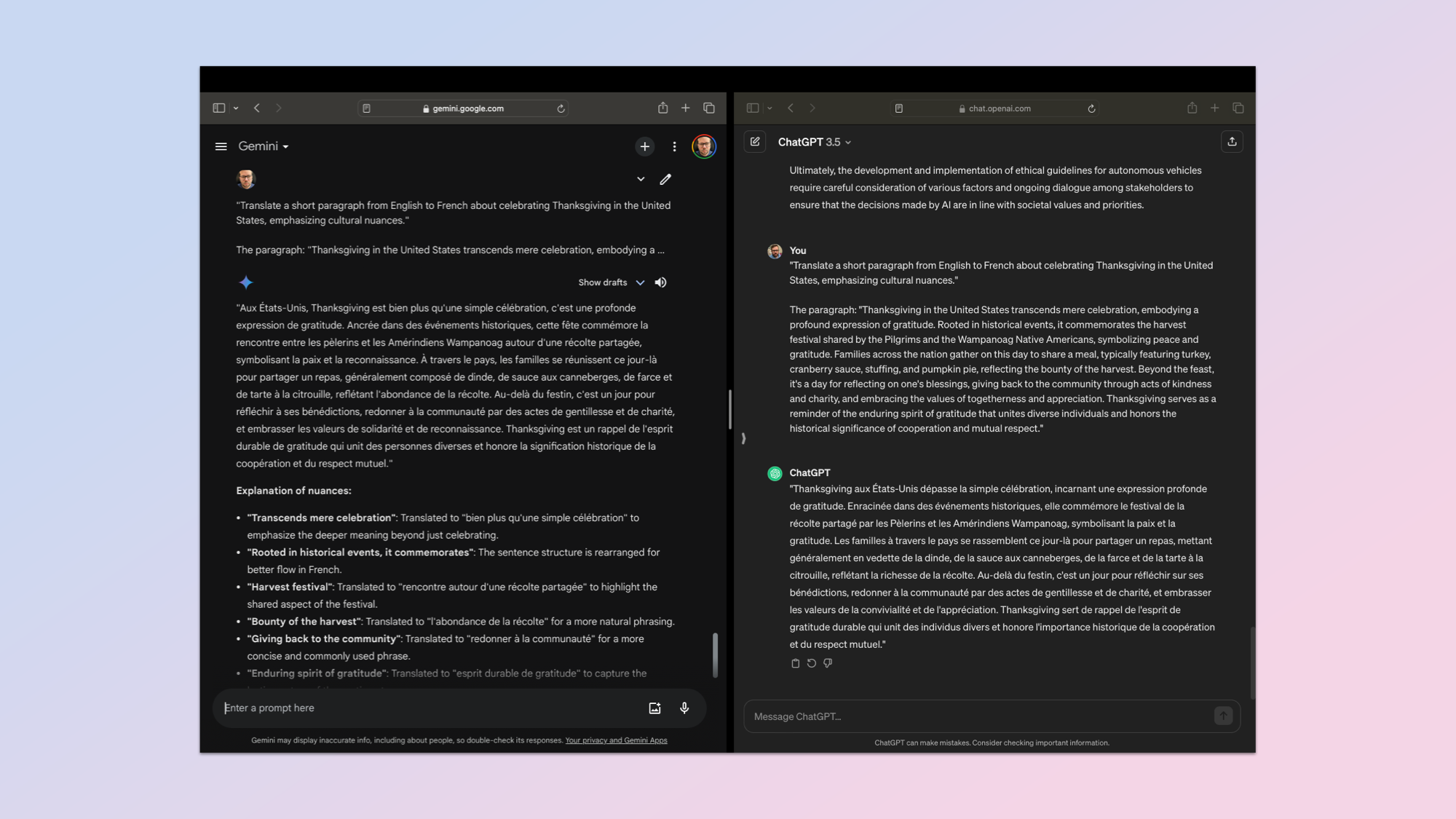

Translation

7. Cross-Lingual Translation & Cultural Awareness

Translating between two languages is an important skill for any artificial intelligence and is something built in to the growing array of AI hardware tools. Both the Humane AI Pin and the Rabbit r1 offer translation, as does any modern smartphone.

But I wanted to go beyond simple translation and test its understanding of cultural nuances. I used the prompt: "Translate a short paragraph from English to French about celebrating Thanksgiving in the United States, emphasizing cultural nuances."

This is the paragraph: "Thanksgiving in the United States transcends mere celebration, embodying a profound expression of gratitude. Rooted in historical events, it commemorates the harvest festival shared by the Pilgrims and the Wampanoag Native Americans, symbolizing peace and gratitude. Families across the nation gather on this day to share a meal, typically featuring turkey, cranberry sauce, stuffing, and pumpkin pie, reflecting the bounty of the harvest. Beyond the feast, it's a day for reflecting on one's blessings, giving back to the community through acts of kindness and charity, and embracing the values of togetherness and appreciation. Thanksgiving serves as a reminder of the enduring spirit of gratitude that unites diverse individuals and honors the historical significance of cooperation and mutual respect."

This was very close, almost a tie. But in the end Gemini offered more nuance in the translation and an explanation of how it approached the translation.

Winner: Gemini.

Knowledge

8. Knowledge Retrieval, Application, & Learning

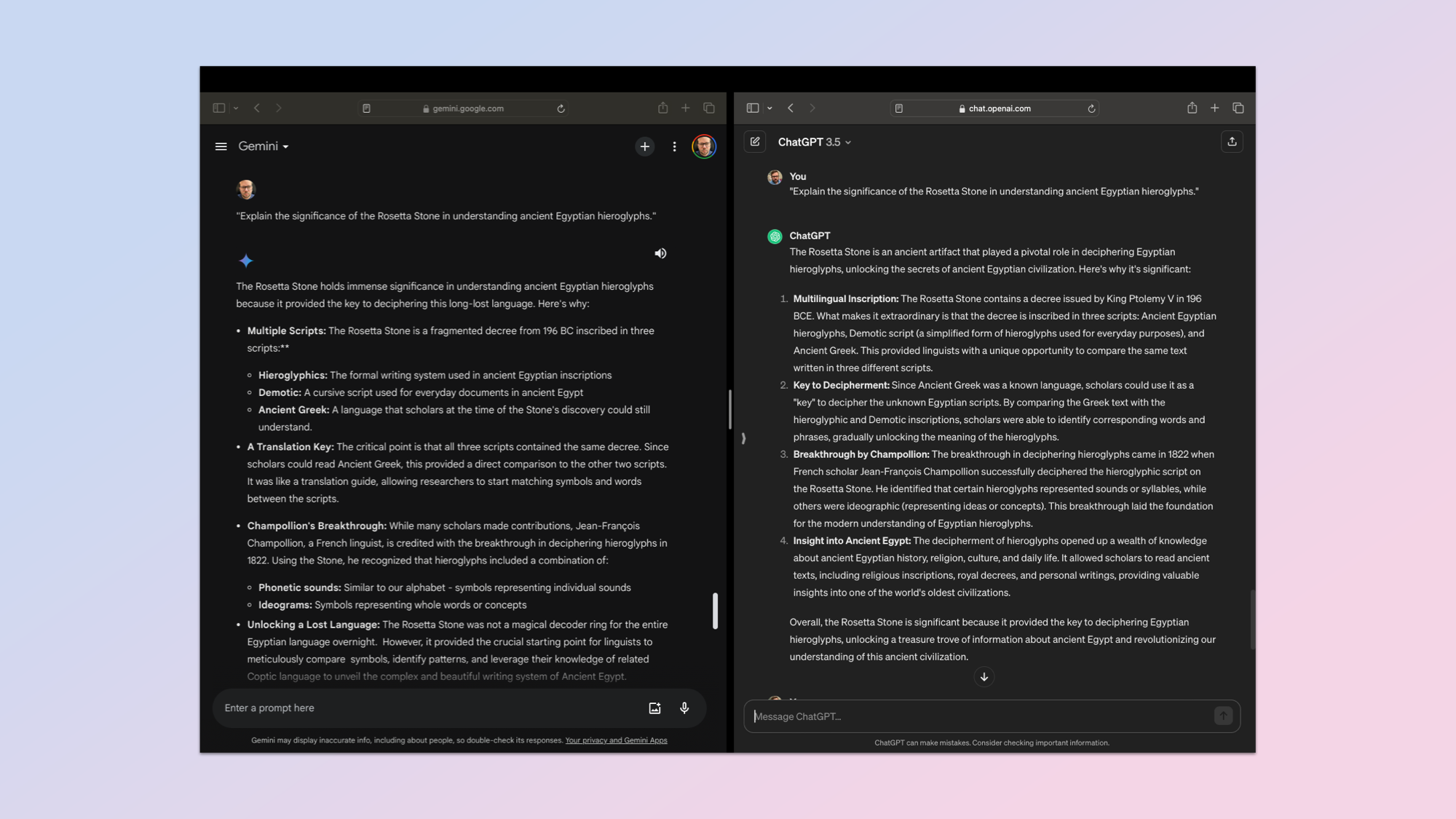

If a large language model can’t retrieve a piece of information from its training data and accurately display it then it really isn’t much use. For this test I used the simple prompt: "Explain the significance of the Rosetta Stone in understanding ancient Egyptian hieroglyphs."

The idea is to understand its depth of knowledge, how it applies the knowledge to a broader theme within archaeology and linguistics and whether it can update its knowledge. Finally, I was testing both ChatGPT and Gemini on the clarity of their responses and how easy they were to understand.

Neither really demonstrated any ability to further enhance its knowledge, but then I didn’t really give it any new information. Both did a good job of displaying the details I wanted.

Information retrieval is the bread and butter of AI, which is why I couldn’t pick a winner. So I fed both responses, labelled simply as chatbot A and chatbot B, into Claude 2, Mixtral, Gemini Advanced and ChatGPT Plus, and none of them would pick a winner.

Winner: Draw.

Conversation

9. Conversational Fluency, Error Handling, & Recovery

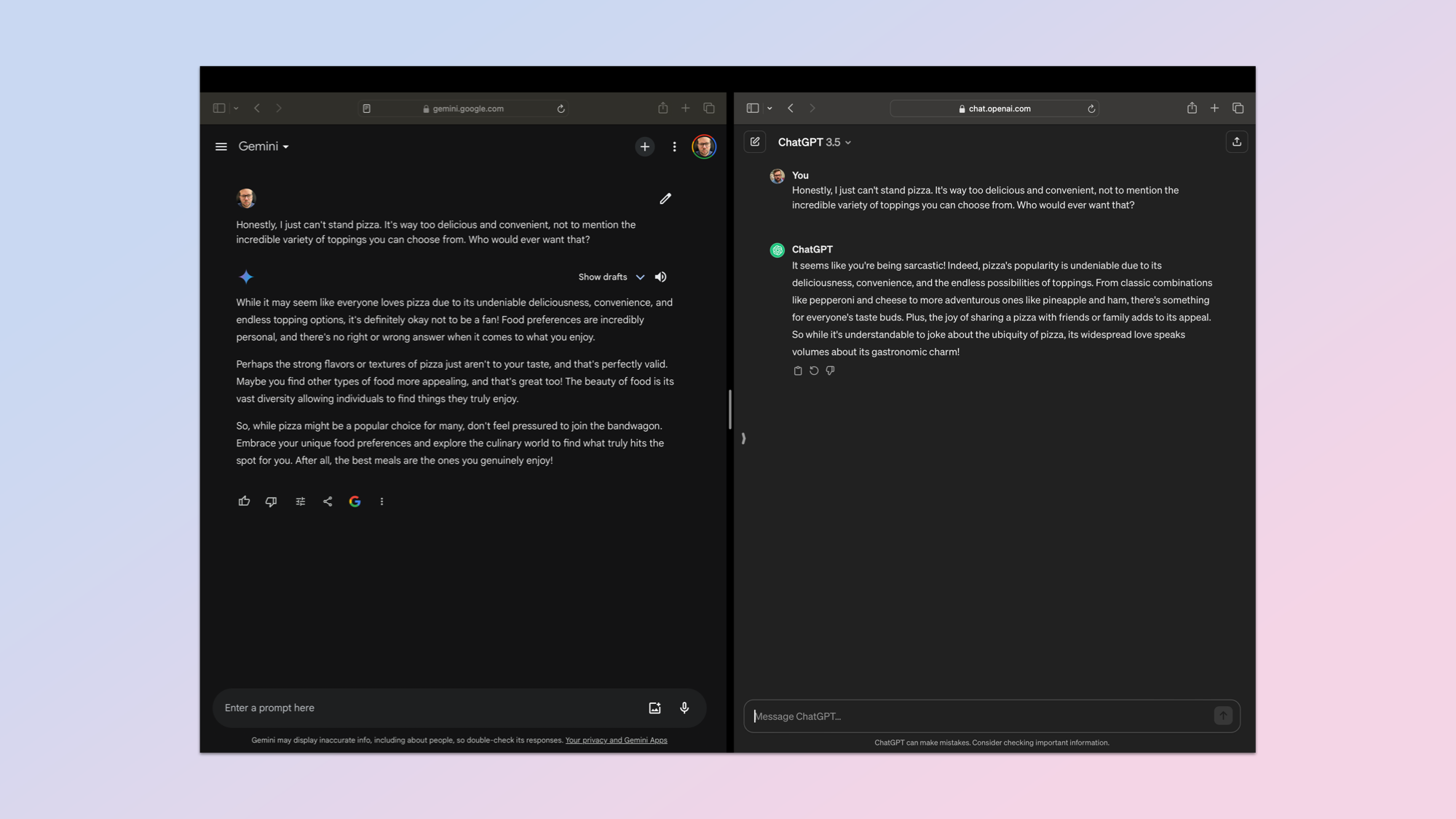

The final test was a simple conversation about pizza, but it was a chance to see how well the AI handled misinformation, sarcasm and recovered from a misunderstanding.

I used the prompt: "During a conversation about favorite foods, the AI misunderstands a user's sarcastic comment about disliking pizza. The user corrects the misunderstanding. How does the AI recover and continue the conversation?"

They both did well and technically Gemini recovered from assuming I was being literal, meeting my rubric requirement for recovery and maintenance of context.

However, ChatGPT detected the sarcasm in the first response and so had no need to recover. Both kept context well and responded in a similar way. I’m giving this round to ChatGPT as it spotted I was being sarcastic from the get go.

Winner: ChatGPT.

ChatGPT vs Gemini: Winner

This was a test of the free-tier chatbots. I will examine the premium versions in the future, as well as look at how open source models like Mixtral and Llama 2 compare. For now, this was a chance to see which performed best on common evaluations.

What this testing demonstrated is that out of the box both ChatGPT (GPT-3.5) and Gemini (Gemini Pro 1.0) are on a roughly equal footing. They had similar quality responses, neither particularly struggled and both are the mid-tier models for their respective owners.

But this is a competition and on five out of the nine tests Gemini came out the winner. We had one tie and ChatGPT won on three tests. This means Gemini won and can be crowned Tom's Guide's best free AI chatbot...for now.