The debate on how to combat social media misinformation is as relevant as ever. In recent years, we’ve seen medical misinformation spreading alongside COVID, and political misinformation impacting the outcome of elections and national referendums.

Experts have largely placed the onus to stop misinformation on three groups: social media users, government regulators, and social media platforms themselves.

Tips for social media users include educating themselves on the subject, and being aware of the tactics used to spread misinformation. Government bodies are urged to work together with social media platforms to regulate and prevent the spread of misinformation.

But all of these approaches depend on social media content we can easily recognise as “reliable”. Unfortunately, our new study just published in The Journal of Health Communication shows reliable content is not easy to find. Even we – researchers and subject matter experts – struggled to identify the evidence base of the social media posts we analysed.

Based on our findings, we have developed new guidelines to help experts create engaging content that also clearly communicates the evidence behind the post.

What did our study find?

We collected and analysed 300 mental health research-related X (formerly Twitter) posts from two large Australian mental health research organisations, posted between September 2018 and September 2019. We chose this timeframe to avoid the influence of recent major events like the Australian bushfires (starting November 2019) or the COVID-19 pandemic (starting March 2020) on our findings.

We assessed the written content within each individual post, whether the post contained hashtags, mentions, hyperlinks and multimedia, and whether the post featured an evidence-based source, such as a peer-reviewed journal article, conference presentation, or clinical treatment guidelines.
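The structural features described above (hashtags, mentions and hyperlinks) are the kind of thing that can be pulled out of post text automatically. The sketch below is purely illustrative and is not the coding procedure used in the study: the function name `post_features`, the regular expressions and the example post are all assumptions. Judging whether a post features an evidence-based source still needs a human reader.

```python
import re

# Hypothetical, simplified patterns (an assumption for illustration only).
HASHTAG = re.compile(r"(?<!\w)#(\w+)")
MENTION = re.compile(r"(?<!\w)@(\w+)")
LINK = re.compile(r"https?://\S+")


def post_features(text):
    """Return the hashtags, mentions and links found in a post's text."""
    links = LINK.findall(text)
    # Strip links first so URL fragments such as "#section" are not
    # mistaken for hashtags.
    stripped = LINK.sub(" ", text)
    return {
        "hashtags": HASHTAG.findall(stripped),
        "mentions": MENTION.findall(stripped),
        "links": links,
    }


# Made-up example post, not taken from the study dataset.
example = (
    "New trial results on youth anxiety from @ExampleLab "
    "https://example.org/paper #mentalhealth"
)
features = post_features(example)
```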

We found it was challenging to reliably establish the evidence behind the posts. Our team agreed on whether a post contained evidence-based information only 56% of the time. That is not much better than a coin flip.
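To see why raw agreement can sit close to chance, it helps to compare it with the agreement two coders would reach by guessing. A common way to do this for two raters is Cohen's kappa, sketched below. This is an illustration only: the article does not say which agreement statistic or how many coders the study used, and the labels in `rater_a` and `rater_b` are made up.

```python
def percent_agreement(a, b):
    """Share of items on which two raters gave the same label."""
    if len(a) != len(b) or not a:
        raise ValueError("ratings must be non-empty and of equal length")
    return sum(x == y for x, y in zip(a, b)) / len(a)


def cohens_kappa(a, b):
    """Agreement between two raters, corrected for chance agreement."""
    n = len(a)
    observed = percent_agreement(a, b)
    labels = set(a) | set(b)
    # Chance agreement: probability both raters pick the same label if each
    # labelled independently at their own observed rates.
    expected = sum((a.count(lab) / n) * (b.count(lab) / n) for lab in labels)
    if expected == 1:
        return 1.0  # both raters used a single identical label throughout
    return (observed - expected) / (1 - expected)


# Hypothetical codes for ten posts: 1 = evidence-based, 0 = not.
rater_a = [1, 1, 1, 0, 0, 1, 0, 1, 0, 0]
rater_b = [1, 0, 1, 0, 1, 1, 1, 0, 0, 1]

agreement = percent_agreement(rater_a, rater_b)
kappa = cohens_kappa(rater_a, rater_b)
```

A kappa near zero means the raters agreed about as often as chance alone would predict, while a value near one means near-perfect agreement.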

When people with years of relevant training can’t reliably identify evidence-based content, how can we expect everyone else to?

Although some posts appeared to be evidence-informed (for example, a researcher commenting on their area of expertise), the source of their statement was unclear in the majority of posts.

This raises further questions about the distinction between misinformation or disinformation and “poor quality information”. We would argue the latter happens when the level of evidence supporting a post is not recognisable even to those trained in the field.

While some posts contained links to evidence-based sources, such as peer-reviewed papers, most offered only expert opinion, for example by linking to news articles. To judge how reliable this content is, a user would need to put in significant extra work – reading resources thoroughly and following up on sources.

It’s not realistic to ask social media users to do this, especially when peer-reviewed sources use complex, technical language and often require payment to access.

How can researchers communicate evidence-based content?

Academics are encouraged to translate their research for the public, but there is limited guidance on how to balance audience engagement with evidence-based information.

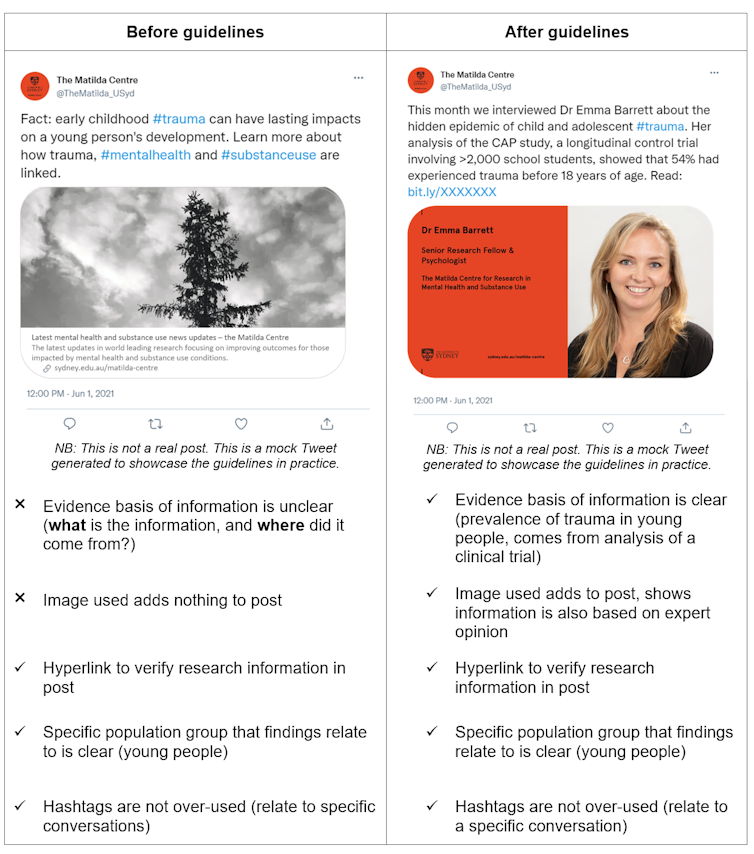

As part of our study, we aimed to create simple, evidence-based guidelines for researchers on how to effectively disseminate mental health research via X. For example, we found that researchers can boost engagement by emphasising the specific population group the research relates to (for example, LGBTIQA+ or culturally diverse communities) and by using images and videos.

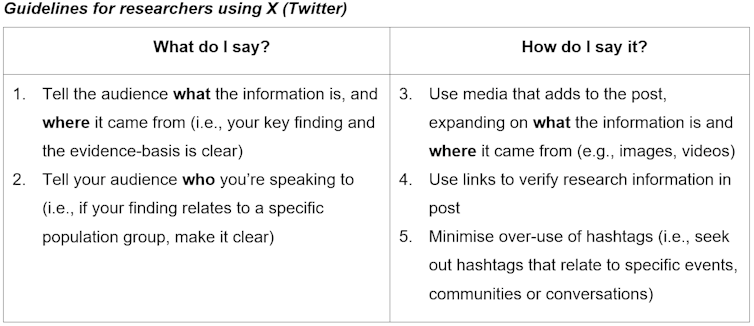

Our guidelines encourage experts to create engaging content that also clearly communicates “what” the information is, “where” the information is from, and “who” the information is for.

We outlined five main points:

What do I say?

1. tell the audience what the information is, and where it came from

2. tell your audience who you’re speaking to

How do I say it?

3. use media that adds to the post, expanding on what the information is and where it came from

4. use hyperlinks to verify research information in the post

5. minimise over-use of hashtags.

We also created mock “before and after” posts, inspired by posts from the study dataset, to illustrate how the guidelines work in practice.

The recent increased focus on tackling online misinformation points to a hopeful future for information quality on social media platforms. But we still have a long way to go to promote evidence-based research in an accessible manner.

Research organisations and experts have a unique opportunity to show leadership in this area. Academics can add their voices to the public discourse as trusted and reliable sources of content people want to engage with – all while maintaining research integrity.

Additionally, because health information is now promoted, shared and re-shared by everyone, these guidelines offer useful tips to help anyone share clear and reliable information, and to empower people to navigate health information on social media effectively.

Louise Thornton receives funding from the National Health and Medical Research Council.

Erin Madden and Katrina Prior do not work for, consult, own shares in or receive funding from any company or organization that would benefit from this article, and have disclosed no relevant affiliations beyond their academic appointment.

This article was originally published on The Conversation. Read the original article.