Apple is something of a latecomer to the large language model (LLM) scene, lagging behind Google, Microsoft and Meta in creating powerful AI tools, but it seems to be catching up quickly.

Earlier this year CEO Tim Cook told investors that there would be a significant announcement around AI that was a “major breakthrough”. Many suspect this will be a new version of Siri powered by an LLM, similar to Google replacing Assistant with Gemini.

Apple researchers have just revealed details of what could be the basis of this next-generation Siri, which, if rumors are true, could work alongside Gemini on the iPhone, offering users a choice.

Released as a preprint research paper, MM1 essentially offers a new method for using AI-generated data and labels to speed up training of new models — including possibly Siri 2.0.

What is Apple MM1?

At its core, MM1 is a new method for training multimodal models using synthetic data including images and text.

The researchers behind MM1 claim their new method speeds up performance and reduces the number of follow-up prompts needed to get a desired result.

Being able to improve prompt understanding and get to the desired output with as little interaction with the AI as possible is perfect for consumer tech, especially for Siri, which will be used by a wide group of people with varying degrees of technological prowess.

The models achieve state-of-the-art pre-training metrics and competitive performance on multimodal benchmarks after fine-tuning.

MM1 seems to be a family of AI models, with the largest at around 30 billion parameters. This is significantly smaller than the trillion-plus parameters reportedly in GPT-4 and Claude 3 Opus, but the researchers still claim to match key benchmarks due to improvements in efficiency.

“By scaling up their recipe, they built MM1, a family of multimodal models up to 30B parameters that achieve state-of-the-art pre-training metrics and competitive performance on multimodal benchmarks after fine-tuning,” they wrote.

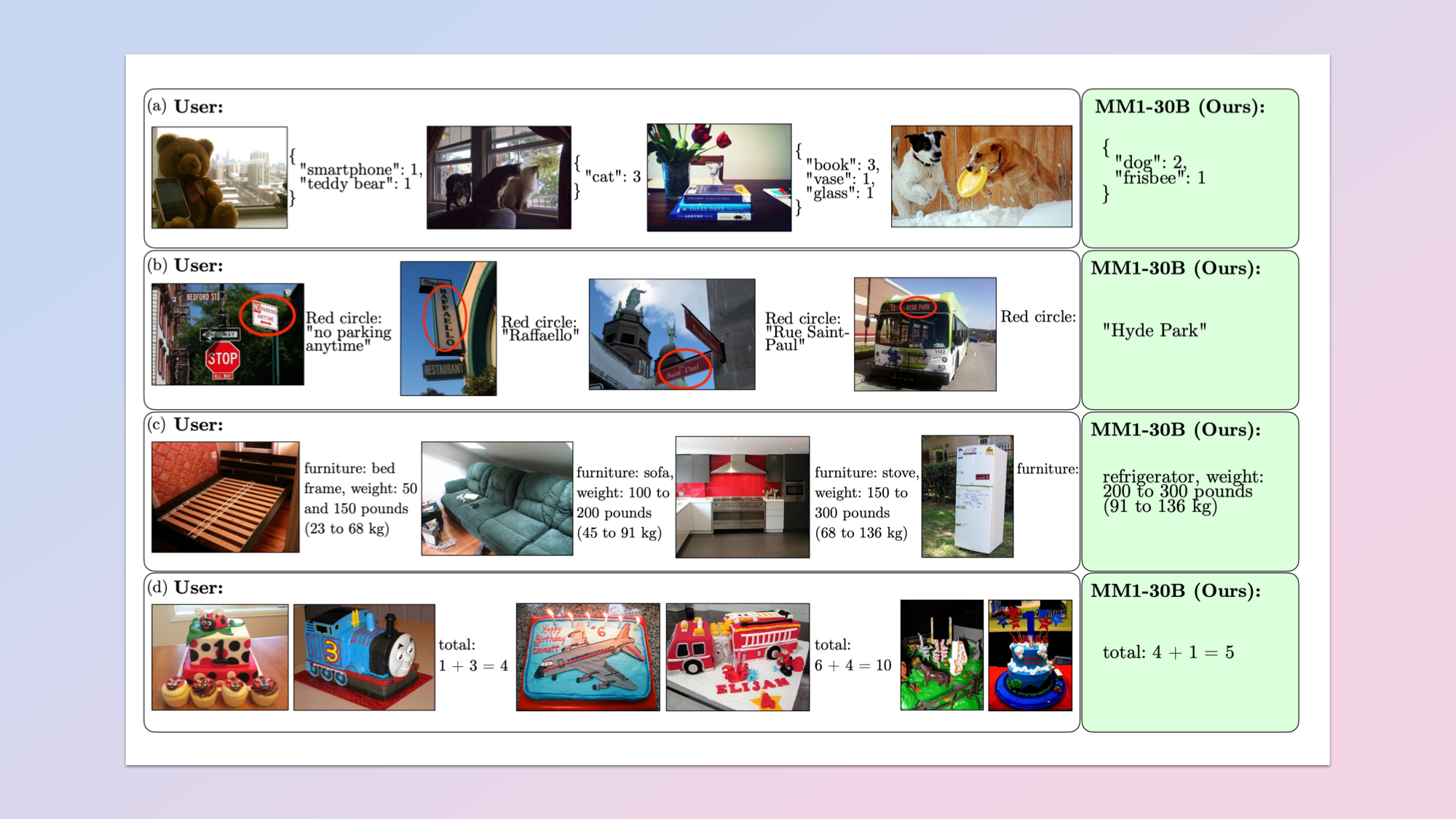

The significant breakthrough is in vision, specifically analysis of images and other forms of visual content and the ability to understand the output. I recently tested how well ChatGPT, Claude and Gemini perform at this task.

How does Apple MM1 work?

The full title of the paper is MM1: Methods, Analysis and Insights from Multimodal LLM Pre-training. It was quietly released with minimal fanfare and is available open source with full details of training data and benchmarks.

In it researchers argue that combining different types of training data and model architectures — instead of relying on a single concept — can lead to state-of-the-art performance.

The team wrote that they used a mix of image-caption, interleaved image-text and text-only data and that a "diverse dataset spanning visual and linguistic information" is required to get that performance.

This includes image captioning, visual question answering and natural language understanding — such as for one-shot or few-shot prompts to get a desired output.
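To make the idea of a training data mix a little more concrete, here is a minimal sketch of how a training loop might pick which kind of data each example comes from. The source names, the weights and the `sample_sources` helper are all hypothetical and chosen only for illustration; they are not taken from Apple's code.

```python
import random
from collections import Counter

# Hypothetical mixing weights, for illustration only.
MIX_WEIGHTS = {
    "image_caption": 0.45,
    "interleaved_image_text": 0.45,
    "text_only": 0.10,
}


def sample_sources(weights, n, seed=0):
    """Pick a data source for each of n training examples, in proportion to its weight."""
    rng = random.Random(seed)  # seeded so the draw is repeatable
    names = list(weights)
    probs = [weights[name] for name in names]
    return rng.choices(names, weights=probs, k=n)


# Count how often each source was chosen across 10,000 draws.
counts = Counter(sample_sources(MIX_WEIGHTS, 10_000))
for name, count in counts.most_common():
    print(f"{name}: {count}")
```

Changing the weights changes how much of each data type the model sees, which is the kind of knob the researchers describe tuning.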

“Thanks to large-scale pre-training, MM1 enjoys appealing properties such as enhanced in-context learning, and multi-image reasoning, enabling few-shot chain-of-thought prompting,” the team explained.

What makes Apple MM1 different?

MM1 uses a different type of architecture to other models, including higher image resolution encoders. It also takes a different approach to pre-training and labelling, and focuses on using that data mix to improve overall performance from a single prompt.

It also uses a mixture-of-experts (MoE) model to scale up while keeping the processing requirements down, which further hints at its potential use on devices like iPhones or laptops, rather than running in the cloud.

Google recently leveraged an MoE architecture in its Gemini 1.5 Pro model with a more than one million token context window. This allowed it to improve efficiency over significant input data.
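As a rough illustration of why a mixture-of-experts design keeps processing requirements down, the sketch below routes an input to only the top-scoring experts instead of running all of them. It is a toy example in plain Python, not Apple's or Google's implementation: the "experts" simply scale their input, and `make_expert`, `moe_forward` and the router weights are made up for the demonstration.

```python
import math


def softmax(scores):
    """Turn raw scores into weights that sum to 1."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def make_expert(scale):
    """Hypothetical expert: multiplies every input value by a fixed scale."""
    return lambda x: [scale * v for v in x]


def moe_forward(x, router_weights, experts, top_k=2):
    """Run only the top_k experts chosen by the router and blend their outputs."""
    # One router score per expert: dot product of that expert's row with the input.
    scores = [sum(w * v for w, v in zip(row, x)) for row in router_weights]
    # Keep the indices of the top_k highest-scoring experts.
    chosen = sorted(range(len(experts)), key=lambda i: scores[i], reverse=True)[:top_k]
    # Normalise the chosen scores into mixing weights.
    gates = softmax([scores[i] for i in chosen])
    out = [0.0] * len(x)
    for gate, i in zip(gates, chosen):
        expert_out = experts[i](x)  # only the selected experts do any work
        out = [o + gate * v for o, v in zip(out, expert_out)]
    return out, chosen


# Four toy experts, but each input only ever runs two of them.
experts = [make_expert(s) for s in (1.0, 2.0, 3.0, 4.0)]
router_weights = [[1.0, 0.0], [0.0, 1.0], [-1.0, 0.0], [0.5, 0.0]]
output, used = moe_forward([1.0, 0.0], router_weights, experts, top_k=2)
print("experts used:", used)
print("output:", output)
```

The total model can hold many experts, but the cost of handling any one input depends on `top_k`, not on how many experts exist. That is the property that makes the approach attractive for phones and laptops.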

Will Apple MM1 power Siri 2.0?

While the paper doesn’t mention Siri or any potential product, the focus on performance and efficiency, achieving solid results with minimal prompting and the need for extensive multimodal capabilities does hint at the direction Apple will go with Siri in the future.

It is likely that many of the features of any LLM-powered Siri will have to run “on device”, particularly around processing personal information due to Apple’s longstanding privacy stance.

Being able to develop a very powerful model that is capable of learning from interactions with users and small enough to run on an iPhone is a big move.

With the recent news that Apple may be bringing Gemini to the iPhone, and previous rumors that the company is also in talks with ChatGPT maker OpenAI, it looks like Apple is taking a multi-faceted approach to achieving the “big bang” in AI that Cook promised investors.